Code quality, best practices and standards are often what separates projects that are maintainable, secure and scalable from projects that need to be rewritten every year. Unfortunately, we were in the latter category for quite a long time, despite everyone preaching best practices and despite being a group of quite smart individuals. The problem was that we each had our own idea of which best practices to apply, which standards to follow and how to define quality. We had to find a way to track and improve it, and then we discovered SonarQube.

SonarQube is a static code analysis tool.

It uses language-specific analyzers and rules to scan code for mistakes, patterns that are known to introduce security vulnerabilities, and code smells. (According to Wikipedia and Robert C. Martin, “Code smell, also known as bad smell, in computer programming code, refers to any symptom in the source code of a program that possibly indicates a deeper problem.”)

Our team’s experience with it

We started using SonarQube about 2 years ago. First we added our main project just to see what it looked like, analyzed it, and saw that it had a couple thousand bugs, some vulnerabilities, and a bunch of code smells. In all fairness, we had it poorly configured at the time, and the project was in the hundreds of thousands of lines of code.

We ignored it for a while after that, mostly because the priorities weren’t aligned with this and we had very limited capacity.

Over the next few months we slowly started looking at it, and the first thing we found was that we had a whole bunch of JavaScript files... in C# class library projects. Yeah, we were surprised too. Apparently they had been there for a while because someone had created the project with the wrong template.

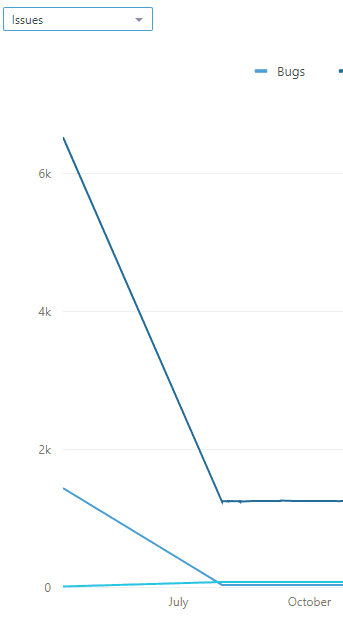

We cleaned all of those up and got rid of a bunch of other files that were just sitting around unused. It turned out we had quite a few vulnerabilities in code we didn’t even know existed, mostly because it wasn’t really part of the project as far as we were aware. By the end of this cleanup (a couple of days) our project had gotten a lot lighter, and our vulnerabilities, bugs and code smells had plummeted. [see image on the right]

We noticed that our project got faster and, most importantly, our engineers started aligning their code with the analyzer's rules; not always, but it was a great start and it was encouraging. But this was our own little analyzer, and the business overall didn’t really care that we were doing this, because they didn’t understand why our code wasn’t already perfect :) We then made it part of our code reviews, and we discovered a feature where the SonarQube analysis is performed only on the code we modified and the findings are written directly into the pull request as comments. At that point we decided to start cleaning up our projects, but we really needed company buy-in to take full advantage of it and make it part of our standard operating procedure.
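For readers who want to try the pull request feature, here is a rough sketch in Python of how a scanner invocation for a pull request might be assembled. It assumes a SonarQube edition that supports pull request analysis through the `sonar.pullrequest.*` analysis parameters; our own setup at the time may have been configured differently, and the project key, pull request number and branch names below are made-up placeholders.

```python
from typing import List


def pull_request_scan_args(project_key: str, pr_key: str, branch: str, base: str) -> List[str]:
    """Build a sonar-scanner command line that analyzes a single pull request.

    Assumption: the server supports the sonar.pullrequest.* parameters.
    All argument values are supplied by the caller (typically the CI system).
    """
    return [
        "sonar-scanner",
        f"-Dsonar.projectKey={project_key}",
        # Identifier of the pull request in the code hosting platform.
        f"-Dsonar.pullrequest.key={pr_key}",
        # Branch that contains the changes under review.
        f"-Dsonar.pullrequest.branch={branch}",
        # Branch the pull request will be merged into.
        f"-Dsonar.pullrequest.base={base}",
    ]


if __name__ == "__main__":
    # Hypothetical values, for illustration only.
    print(" ".join(pull_request_scan_args("main-project", "42", "feature/cleanup", "main")))
```

A CI job would typically pass the resulting list to something like `subprocess.run`, filling in the values from the environment variables its pipeline provides.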

Now the hard part

Introducing such a tool in a company as large and diverse as ours seemed impossible to our management; luckily, we didn’t have any management for a little while (more on this in a future post). Once we started asking around, we also discovered that some teams were already using SonarQube themselves, while others had either explored it passively or were interested in using it. Our approach was to email every team we had ever heard of, inviting each to assign 1 or 2 senior engineers and to forward the invite to any other teams they knew of, so that we could come together a couple of weeks later on a global conference call to discuss forming the company’s first code standards and quality committee. Every team, no matter how large or small, was welcome, as was any programming language they used. We set up the SonarQube server with the popular analyzers we knew teams used, and opened it up. The email below is what really started it, in case you’d like to try this in your company:

Since most teams are already using SonarQube or are interested in using it, we’ve heard some questions about which rules we’d like to follow, which to ignore and how to best use Sonar as part of our process. The short answer is we need to decide.

We’d like to set up a screenshare between everyone on this chain, and go language by language and choose which rules to enable/disable or change the severity or type on, which plugins to add/remove, features to configure, etc.

The SonarQube server has been updated to the latest version and has the default rules enabled for each language. This server is available for everyone to use, and has now been integrated with our SSO server.

We also installed a few new plugins:

https://github.com/stevespringett/dependency-check-sonar-plugin

https://github.com/Coveros/zap-sonar-plugin

SonarPHP

OpenID Connect Authentication

CKS Slack Notifier

We recommend reviewing the rules at https://[sonar url]/coding_rules before the call, and analyzing your project. If you need any help doing so, feel free to reach out to me.

The first call was absolutely everything we had hoped for, and it was only the first of several. Everyone agreed that this was something that would benefit every team, and that the default profiles were a good place to start. Of course there were fears as well, well articulated and justified. The most notable one was that some teams had a decade of continuous development invested in their project, and while it didn’t follow all the best practices, it worked, and rewriting the whole thing just to comply with today’s best practices couldn’t be justified. We discussed it among ourselves and came up with a compromise: older code would be brought up to standard over time as functionality was updated, but all new code had to follow the rules we had agreed on and have a passing grade of A in order to be released. This put us on a pretty incredible upward slope in quality, standardization and agreed-on best practices, with buy-in from everyone who was actually writing code.
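To make the "grade A on new code" rule concrete, here is a minimal sketch in Python of how such a release check could look. It is not our actual gate configuration. It assumes the new-code ratings are available under the metric keys `new_reliability_rating`, `new_security_rating` and `new_maintainability_rating`, and that a value of 1 stands for A (with 5 standing for E); the helper names are hypothetical.

```python
from typing import Dict, List

# Assumed metric keys for ratings computed on new code only.
NEW_CODE_RATINGS = (
    "new_reliability_rating",
    "new_security_rating",
    "new_maintainability_rating",
)


def failing_new_code_ratings(measures: Dict[str, str]) -> List[str]:
    """Return the new-code rating metrics that are worse than A.

    Assumption: ratings arrive as numeric strings where "1.0" means A.
    """
    failures = []
    for key in NEW_CODE_RATINGS:
        value = measures.get(key)
        # A missing metric counts as a failure, so the check fails closed.
        if value is None or float(value) > 1.0:
            failures.append(key)
    return failures


def can_release(measures: Dict[str, str]) -> bool:
    """New code may be released only if every new-code rating is A."""
    return not failing_new_code_ratings(measures)


if __name__ == "__main__":
    # Hypothetical measures for one pull request.
    sample = {
        "new_reliability_rating": "1.0",
        "new_security_rating": "1.0",
        "new_maintainability_rating": "2.0",
    }
    print(can_release(sample), failing_new_code_ratings(sample))
```

Because only the new-code metrics are checked, a decade-old project with thousands of existing findings can still pass, which is exactly the compromise described above.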

We then drafted an SOP, got every team to pitch in anything they had to add, and now the rest is just another part of our standard process.

Next, of course, we had to automate it.
