DevOps is an absolute necessity today, and everyone I talk with seems to agree, but few agree on what DevOps engineers actually do. I think one of the most important things they bring to the industry is a process like the one described below: taking code from the developers and building a fully automated release pipeline that optimizes everyone’s time (from developers to product owners and stakeholders) while addressing every compliance, security and stability risk, along with the tools to support it in production (I’ll discuss what happens in production in a future article).

The process below works very well for the types of projects my team builds, and can apply to many other teams. I spent quite a bit of my free time over the last couple of years creating and optimizing it. We have most of it in place already, but I came up with a few new ideas along the way, which I describe below. Those will now go on our to-do list, and we’ll hopefully start using them in a few weeks.

Development process

We are a Scrum team, planning work in 2-week increments, reacting fast to change, and following best practices in research, planning, architecture and writing software. The team’s focus is on building great software, so we want them focused on what’s important.

- As a developer, I take on a new feature or bug that’s in the sprint and ready for development, and mark it as development in progress.

- Then I create a new branch off of develop, and do the work.

- Then I commit one or more times, mentioning #[Work Item Number] in the commit message along with a description of what changed.

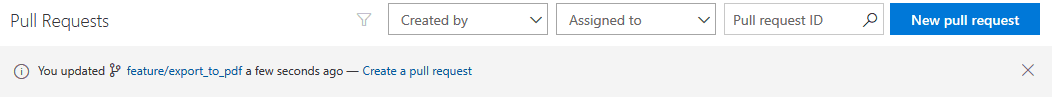

- Once I consider the work complete and “tested” locally on my computer, I’ll push the branch, go into TFS and click the shortcut to create a PR

* develop is locked, so all changes have to enter via Pull Requests

- On the next page I’ll make sure everything looks good, by doing one more check of the changes in the code, and click Create

- Done and move on to greater things
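The `#[Work Item Number]` convention in commit messages is what lets the tooling link work items automatically. As a rough illustration only (TFS does this linking itself; the pattern and function name below are assumptions, not its actual implementation), extracting those references could look like this:

```python
import re

# Assumed convention: a work item is referenced as "#" followed by digits,
# and the "#" must not be glued to a preceding word character.
WORK_ITEM_PATTERN = re.compile(r"(?<!\w)#(\d+)\b")


def linked_work_items(commit_message: str) -> list[int]:
    """Return the distinct work item numbers mentioned in a message, in order."""
    seen: list[int] = []
    for match in WORK_ITEM_PATTERN.finditer(commit_message):
        number = int(match.group(1))
        if number not in seen:
            seen.append(number)
    return seen


message = "Fix null reference in order export #1234, also touches #1250"
print(linked_work_items(message))  # [1234, 1250]
```

A commit with no `#` reference returns an empty list, which is the case the "at least one linked work item" policy would catch later in the Pull Request.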

What happens behind the scenes

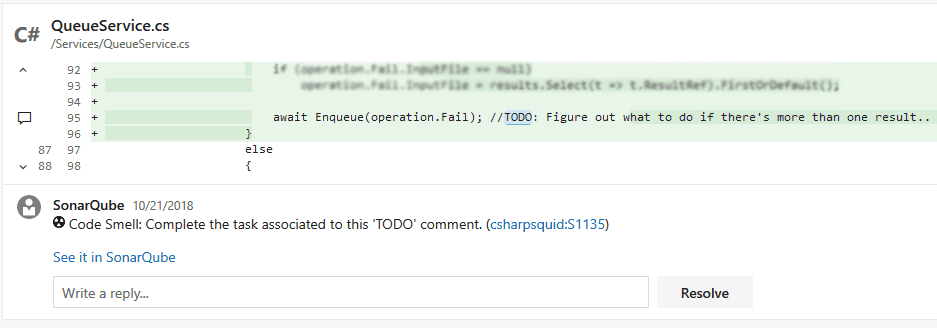

- The linked work item statuses are updated to Review in Progress
- TFS Pull Request checks policies (these run anytime the branch or PR is changed in any way):
  - tests if it can merge successfully into develop (the target branch). If it can’t, it stops and right away alerts the person who created it, both in the PR and by email. This usually happens if develop has diverged from what you had and the files you changed also changed in develop.
  - checks for linked work items; at least one linked work item is required (they are auto-linked if mentioned in a commit, and can be manually added to the PR anytime)
  - requires at least 2 people on the dev team, other than the person who created the PR, to approve, at least one of whom has to be a senior developer. Votes are reset after any new change is pushed
  - requires 1 person from the QA team to approve
  - checks if every comment in the PR is resolved
  - queues a build on the branch:
    - our SonarQube rules are downloaded
    - the build makes sure the code compiles
    - runs our unit test suite (records test results and coverage)
    - runs OWASP Dependency Check (see: https://www.owasp.org/index.php/OWASP_Dependency_Check)
    - the code is analyzed using the SonarScanner for MSBuild
    - the analysis + test results / code coverage reports + dependency check reports are uploaded to the SonarQube server
    - the SonarQube server posts each issue it found back to the PR as comments
    - debugging symbols are uploaded to our Symbol Server (you can also upload them to a file share)
    - a new container image is created from the build artifacts of the site
    - a docker container is started with the new instance of the website, RavenDB, Redis and Elastic Stack (filebeat, elasticsearch, logstash and kibana) loaded with snapshot test data
    - a small suite of browser automation smoke tests run against the environment to check for basic functionality
    - the swagger json is downloaded from the newly deployed instance and compared with the one on develop; if different, the PR is updated to indicate it with a warning
    - the link to the new site is added to the Pull Request, so the reviewer is a button click away from actively testing the PR changes in a full environment
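To make the approval rules above concrete, here is a minimal sketch of how they combine. TFS evaluates these policies itself; the `PullRequest` and `Approval` types, the team labels and the failure messages are all hypothetical names used for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Approval:
    reviewer: str
    team: str  # hypothetical labels: "dev" or "qa"
    is_senior: bool = False


@dataclass
class PullRequest:
    author: str
    linked_work_items: list[int] = field(default_factory=list)
    approvals: list[Approval] = field(default_factory=list)
    unresolved_comments: int = 0
    merges_cleanly: bool = True
    build_succeeded: bool = True


def policy_failures(pr: PullRequest) -> list[str]:
    """Return the reasons a PR cannot be completed; an empty list means it can."""
    failures: list[str] = []
    if not pr.merges_cleanly:
        failures.append("merge conflict with develop")
    if not pr.linked_work_items:
        failures.append("no linked work item")

    # Approvals from the author never count toward the developer quota.
    dev = [a for a in pr.approvals if a.team == "dev" and a.reviewer != pr.author]
    if len({a.reviewer for a in dev}) < 2:
        failures.append("fewer than 2 developer approvals")
    if not any(a.is_senior for a in dev):
        failures.append("no senior developer approval")
    if not any(a.team == "qa" for a in pr.approvals):
        failures.append("no QA approval")

    if pr.unresolved_comments > 0:
        failures.append("unresolved comments")
    if not pr.build_succeeded:
        failures.append("build failed")
    return failures
```

Resetting votes on a new push would correspond to clearing `approvals` whenever the branch changes, which is why every check has to be re-evaluated from scratch each time.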

The dedicated ELK stack is there, loaded up with all our filters and dashboards, so that reviewers can debug and dig for more information.
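The Swagger comparison step from the build can be sketched in a few lines. This is an illustration under assumptions, not our actual build task: it takes the two documents as JSON strings and relies only on the top-level `paths` object that Swagger/OpenAPI documents define; the function names are made up.

```python
import json


def swagger_changed(develop_json: str, branch_json: str) -> bool:
    """True when the two documents differ after parsing (key order is ignored)."""
    return json.loads(develop_json) != json.loads(branch_json)


def changed_paths(develop_json: str, branch_json: str) -> dict[str, list[str]]:
    """Summarize which API paths were added, removed or modified."""
    old = json.loads(develop_json).get("paths", {})
    new = json.loads(branch_json).get("paths", {})
    return {
        "added": sorted(new.keys() - old.keys()),
        "removed": sorted(old.keys() - new.keys()),
        "modified": sorted(p for p in old.keys() & new.keys() if old[p] != new[p]),
    }
```

Comparing parsed JSON rather than raw text avoids false warnings caused by whitespace or key ordering, and the path summary gives the reviewer something more useful in the PR warning than "the file changed".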

Reviewing Pull Requests by Developers

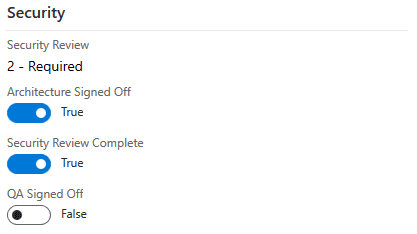

As a developer, throughout the day I’ll check whether anyone has commented on one of my Pull Requests, or whether someone else has a Pull Request ready for my review. PRs are peer reviewed.

- I’ll open the pull request and read the quick description and linked work items to get an idea of what it’s about
- See if there are any other discussions going on, and contribute if I can
- Look through the code, checking for best practices in architecture, security and coding style, and whether it’ll work well with other features existing in the system
- If one of the linked work items requires extra review, I’ll do either a Security Review or an Architecture Review, and if it looks good, I’ll check the appropriate box on the PBI/work item

- Each review takes about 5-10 minutes usually

The critical role of QA

QA as a discipline and industry is probably as large as development itself in the activities they can perform, and everyone seems to have their own understanding of this role. While there are many sides to it, the following duties I believe are critical:

- Exploratory testing - browsing the product and clicking on everything possible, and trying to break it by using it
- Test case creation
  - step by step test cases, created based on the description of the Product Backlog Item / User Story, under the review of the product owner
  - test cases created based on findings from exploratory testing
  - low-level test cases created for specific areas of the code by looking at the code and trying to find logic boundaries
  - workflow test cases, describing a full sequence of steps/events a user might do in order to accomplish something in the system
- Test case execution - a manual execution of test cases against the product, performed by someone separate from the person who wrote the test case
- Test case automation
  - The development team (or QA automation development team) takes the test cases created by QA, and automates them so they can be run unattended and fast
  - Browser automation tests for UIs using tools like Selenium
  - Functional tests to be run against APIs, including simulation of what any stakeholder’s application might be doing
  - Smoke tests to quickly test a small number of critical system features
  - Stress tests to identify the performance limits of the system

Nightly Instance

The nightly server allows stakeholders (including other teams/applications that interface with us) to see the latest version of the product and interact with it really early on. This also allows them to run their own tests against our site and to let us know if we broke anything

- Once the Pull Request is completed, the code is in develop
- Once in develop, every night the latest version gets built, similar to the Pull Request build
  - The next version of the product is computed and the newest commit is tagged with the version. The version follows the rules of Semantic Versioning (see https://semver.org/) for APIs; for UI projects, it’s computed based on the backlog
  - A larger suite of unit tests runs against the binaries, building up the code coverage report
  - The code is also analyzed with SonarQube and OWASP Dependency Check
  - The build artifacts and symbols are uploaded back to TFS
- A release is created
  - The agent on the Nightly server pulls the release
  - The release has a step to configure the IIS Website and Application Pool in case anything changes
  - The website is deployed via Web Deploy. In the case of Windows services, the services are stopped, files are copied to their destination, then the services are started back up
  - A larger suite of functional, security, and browser automation tests is run against the instance (see https://scatteredcode.net/testing-concepts/ for more details on types of tests)
  - Swagger API documentation json is downloaded, and posted to our Confluence project’s Nightly API documentation page

Our team benefits from the TFS wiki per project, but we use Confluence for central documentation across every team. Confluence allows people to subscribe to pages and receive a diff when a page changes. This is ideal for structured API documentation: other teams can usually tell quickly if something they depend on will break, and can ask us to pull the brakes on the release to give them time to prepare.
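For APIs, the version computed during the nightly build follows Semantic Versioning. A minimal sketch of the bump rules is below; how the kind of change is detected is not covered here, so the `change` labels ("breaking", "feature", "fix") and the function name are assumptions for illustration.

```python
def next_version(current: str, change: str) -> str:
    """Bump a MAJOR.MINOR.PATCH version according to the kind of change."""
    major, minor, patch = (int(part) for part in current.split("."))
    if change == "breaking":
        # Incompatible API change: bump MAJOR, reset MINOR and PATCH.
        return f"{major + 1}.0.0"
    if change == "feature":
        # Backwards-compatible functionality: bump MINOR, reset PATCH.
        return f"{major}.{minor + 1}.0"
    if change == "fix":
        # Backwards-compatible bug fix: bump PATCH only.
        return f"{major}.{minor}.{patch + 1}"
    raise ValueError(f"unknown change type: {change!r}")


print(next_version("1.4.2", "feature"))  # 1.5.0
```

The resulting string is what would be applied as the git tag on the newest commit, and later reused as the release branch name.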

Preparing a release

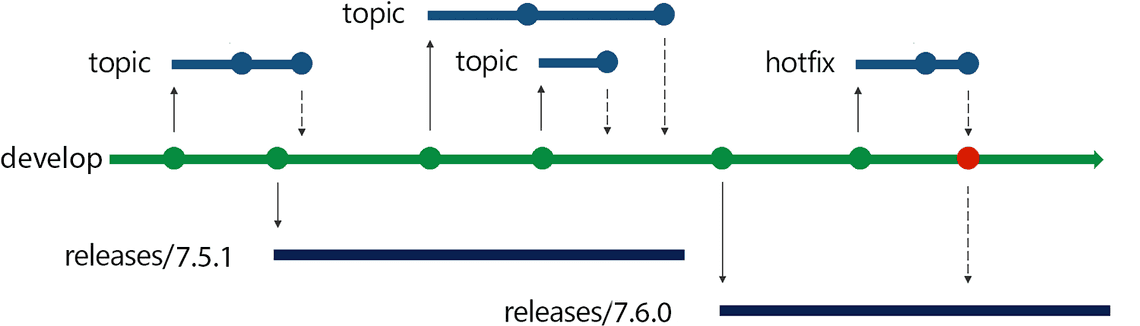

As we follow Microsoft’s Release Flow (see https://devblogs.microsoft.com/devops/release-flow-how-we-do-branching-on-the-vsts-team/) the steps are quite straightforward:

The product owner decides what they want to release. We pick a point along our develop branch and create a new branch with the version of the next release (which we get from the git tag), and push the branch

In the rare cases where we need to release everything EXCEPT that one thing, we branch right before it, then cherry-pick the rest of the commits. This keeps our long-running stable product branch clean, because release branches are only temporary.
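The "everything except that one thing" case can be reasoned about as a simple split of the develop history. The sketch below assumes the excluded change landed as a single commit (for example a squash-merged PR) and that `commits` lists develop oldest first up to the chosen release point; the function name is hypothetical.

```python
def plan_release_branch(commits: list[str], excluded: str) -> tuple[str, list[str]]:
    """Return (commit to branch from, commits to cherry-pick in order)."""
    index = commits.index(excluded)
    if index == 0:
        raise ValueError("nothing precedes the excluded commit to branch from")
    branch_point = commits[index - 1]   # branch right before the excluded change
    cherry_picks = commits[index + 1:]  # then replay everything after it
    return branch_point, cherry_picks


history = ["a1", "b2", "c3", "d4"]
print(plan_release_branch(history, "b2"))  # ('a1', ['c3', 'd4'])
```

Cherry-picks can still conflict when a later commit depends on the excluded one, so the resulting branch goes through the same build and test steps as any other release branch.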

What happens next (QA server)

- A build is queued using the release branch
  - The build has the same steps as the Pull Request / Nightly builds
  - Our full suite of unit tests runs against the binaries, creating the full code coverage report
  - The code is also analyzed with SonarQube and OWASP Dependency Check
  - The compiled code is virus scanned
  - The build artifacts and symbols are uploaded back to TFS
  - This is the last time this version is built. After it’s deployed to QA, it will be deployed to Staging, and finally Production, without building again
- A release is created
  - The agent on the QA servers pulls the release
  - The release has a step to configure the IIS Website and Application Pool in case anything changes
  - The website is deployed via Web Deploy. In the case of Windows services, the services are stopped, files are copied to their destination, then the services are started back up
  - A Qualys SSL Labs scan is run against the site, checking for a minimum Certificate Grade of A (security is dynamic) and for Certificate Expiration (see https://marketplace.visualstudio.com/items?itemName=kasunkodagoda.ssl-labs-test)
  - The full suite of functional, security, and browser automation tests is run against the instance (see https://scatteredcode.net/testing-concepts/ for more details on types of tests)
  - Our suite of ZAP penetration tests is queued up in our ZAP server (see https://www.owasp.org/index.php/OWASP_Zed_Attack_Proxy_Project)
  - A Nessus Tenable scan is scheduled to run against the instance (see https://www.tenable.com/products/nessus/nessus-professional)
- Release notes are generated as Markdown from the linked Work Items, and posted to the project’s TFS Wiki Releases page (see https://scatteredcode.net/publishing-swagger-api-documentation-to-confluence-during-release/)
  - The release notes contain the version, referenced work items, a list of commits and the commit messages, and the results / summary of the SonarQube analysis, test suites and security tests
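The release notes step above boils down to rendering Markdown from data the pipeline already has. A minimal sketch follows, covering only the version, work items and commits (the SonarQube, test and security summaries are left out); the `WorkItem` type, the layout and the function name are illustrative assumptions rather than our actual template.

```python
from dataclasses import dataclass


@dataclass
class WorkItem:
    number: int
    title: str
    kind: str  # e.g. "Feature" or "Bug"


def render_release_notes(
    version: str,
    work_items: list[WorkItem],
    commits: list[tuple[str, str]],  # (sha, message) pairs
) -> str:
    """Render release notes as a Markdown document."""
    lines = [f"# Release {version}", "", "## Work items", ""]
    for item in sorted(work_items, key=lambda i: i.number):
        lines.append(f"- #{item.number} ({item.kind}): {item.title}")
    lines += ["", "## Commits", ""]
    for sha, message in commits:
        # Short SHAs keep the list readable on a wiki page.
        lines.append(f"- `{sha[:8]}` {message}")
    return "\n".join(lines) + "\n"
```

Because the output is plain Markdown, the same text can be posted to the wiki and pasted into the announcement email without reformatting.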

QA Team Signoff on the Release

- The QA exploratory and manual test case execution teams start working on validating the release
- All the information above is ready and available for their review

Moving to Staging

One click of a button, and the release is promoted to Staging

- At this step, we’re one step from production, and it’s time to let everyone officially know this is happening
- The build artifacts get deployed to Staging the same way they were deployed to QA
- An SSL Labs scan is run against the environment, along with another suite of verification and regression tests
- Swagger API documentation json is downloaded, and posted to our Confluence project’s Staging API documentation page
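The SSL Labs gate used on QA and Staging amounts to two checks: the grade must be at least A, and the certificate must not be close to expiring. The sketch below shows that logic only; the real scan is done by the release task, and the grade list, the 30-day warning window and the function names are assumptions.

```python
from datetime import datetime, timedelta, timezone

# Letter grades from best to worst; anything else is treated as a failure.
GRADE_ORDER = ["A+", "A", "A-", "B", "C", "D", "E", "F"]


def grade_meets_minimum(grade: str, minimum: str = "A") -> bool:
    """True when `grade` is at least as good as `minimum`."""
    if grade not in GRADE_ORDER:
        return False
    return GRADE_ORDER.index(grade) <= GRADE_ORDER.index(minimum)


def certificate_expires_soon(
    not_after: datetime, now: datetime, warn_days: int = 30
) -> bool:
    """True when the certificate expires within `warn_days` of `now`."""
    return not_after - now < timedelta(days=warn_days)


now = datetime(2024, 1, 1, tzinfo=timezone.utc)
expiry = datetime(2024, 1, 15, tzinfo=timezone.utc)
print(grade_meets_minimum("A-"), certificate_expires_soon(expiry, now))  # False True
```

Treating unknown grades as failures keeps the gate conservative: a scan result the pipeline does not understand blocks the release instead of letting it through.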

The Product Owner’s Signoff on the Release

Time to review everything

- They set a date/time for the release to production
- The PO has the version number and release notes ready, and needs only to copy-paste relevant information into an email for business and C-level users, maybe adjusting the wording a bit

Release to Production

By this point the release has been reviewed so many times, in so many ways, that this is the easy part, even in cases where the product is deployed to 10 datacenters across 4 continents with 20+ servers in each location and zero downtime is expected.

- Each server has an agent on it, and the release pipeline is limited to a maximum of 2 servers at a time per region, so that some are always available to process requests
- The servers are taken out of HA Proxy
- The site is deployed using Web Deploy locally
- A local suite of smoke tests runs against the instance to make sure everything works
- The servers are set back to live in HA Proxy
- The next servers follow the same process until all the servers have the latest version
- An SSL Labs scan is run against the environment for final checking
- Swagger API documentation json is downloaded, and posted to our Confluence project’s Production API documentation page
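The rolling deployment above can be sketched as a loop over small batches. This is a simplified model, not the release pipeline itself: the four callables stand in for the HA Proxy, Web Deploy and smoke test steps, regions are processed one after another for brevity, and all names are hypothetical.

```python
from typing import Callable, Iterator


def batches(servers: list[str], size: int = 2) -> Iterator[list[str]]:
    """Yield consecutive groups of at most `size` servers."""
    for start in range(0, len(servers), size):
        yield servers[start:start + size]


def rolling_deploy(
    regions: dict[str, list[str]],
    drain: Callable[[str], None],        # take the server out of the load balancer
    deploy: Callable[[str], None],       # deploy the site locally on the server
    smoke_test: Callable[[str], bool],   # True when the local smoke tests pass
    enable: Callable[[str], None],       # put the server back into rotation
    batch_size: int = 2,
) -> None:
    for servers in regions.values():
        for batch in batches(servers, batch_size):
            for server in batch:
                drain(server)
            for server in batch:
                deploy(server)
                if not smoke_test(server):
                    # Stop here: the batch stays drained, the rest keep serving.
                    raise RuntimeError(f"smoke tests failed on {server}")
            for server in batch:
                enable(server)
```

Failing fast on a bad smoke test is the point of the small batch size: at most two servers per region are ever out of rotation, and every server that has not been touched yet keeps running the previous version.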
